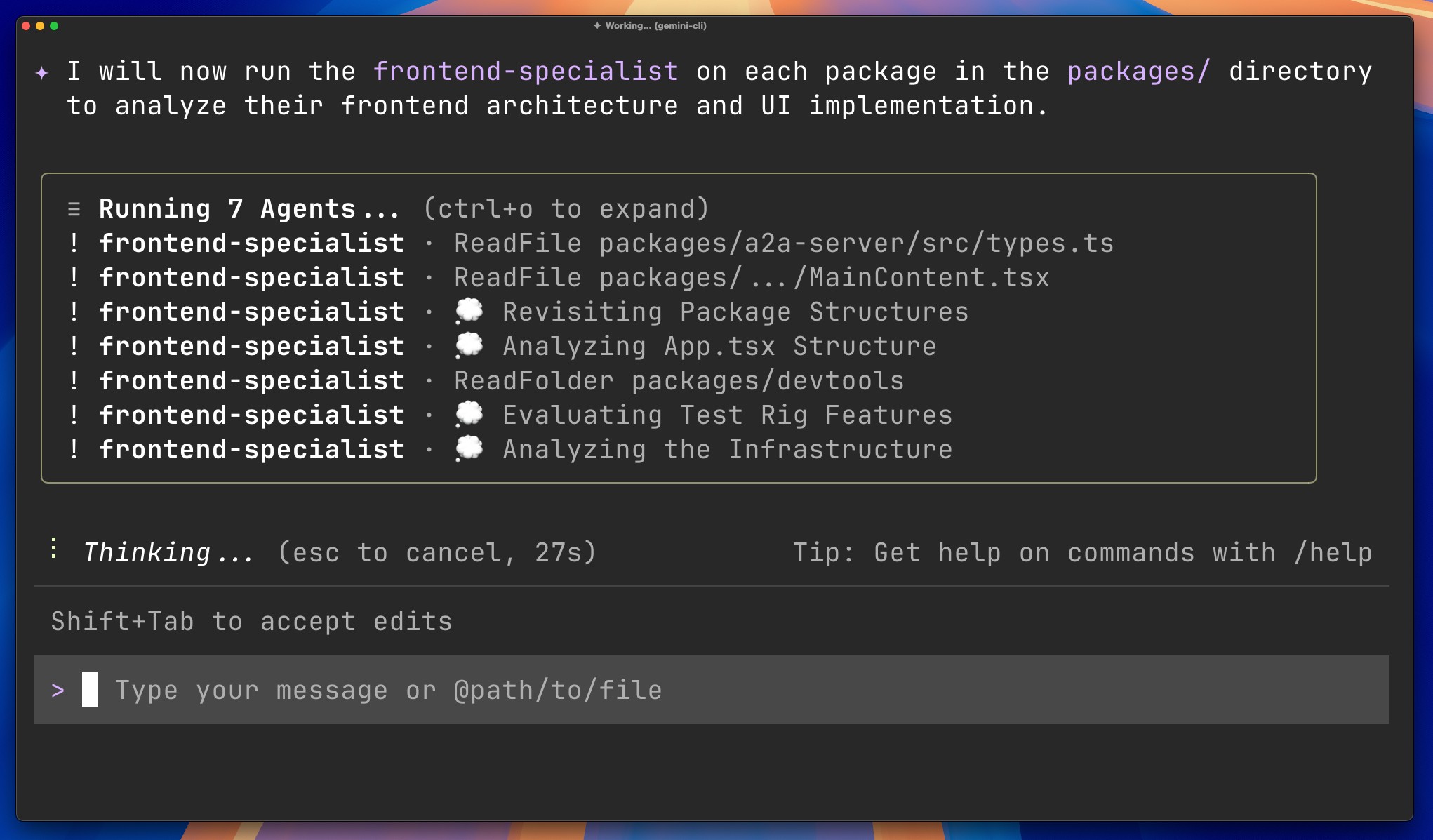

Google has introduced subagents into the Gemini CLI, turning the traditional terminal into a multi-agent dispatch centre.

Developers can now summon specialised expert agents straight from the terminal. They use a simple `@agent` syntax to delegate work. You build an agent, give it a specific job, and trigger it to run in parallel with your primary coding session.

Feed an AI too many files, and it suffers from what Google accurately identifies as context rot. The model forgets earlier instructions, starts hallucinating variables, and loses the plot.

Engineers working on legacy enterprise systems know this pain. They spend hours priming a model with architectural rules, only to watch it generate conflicting logic ten prompts later. As context windows stretch into the millions of tokens, the noise drowns out the relevant data.

Subagents fix this by isolating the context windows. Each subagent gets exactly the data it needs to execute a high-volume task. The primary session remains fast and stays focused on the overarching goal.

When a developer needs a database schema refactored, they do not ask the main AI to do it. They type `@db-agent` and offload the work. The subagent spins up in its own isolated environment, reads the relevant files, executes the code generation, and returns the finished product to the primary session. The main model never has to clog its memory with irrelevant backend logic while trying to help the developer write frontend user interfaces.
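To make the isolation concrete, here is a minimal Python sketch of the idea, not Gemini CLI's actual implementation. The `Session`, `run_subagent`, and `dispatch` names are hypothetical, and the model call is replaced by a stand-in string. The point it illustrates is that the subagent reads the files in its own fresh history, and only the finished result lands in the primary session.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """A conversation with its own private message history (context window)."""
    name: str
    history: list[str] = field(default_factory=list)

    def add(self, message: str) -> None:
        self.history.append(message)


def run_subagent(agent_name: str, task: str, files: dict[str, str]) -> str:
    """Hypothetical subagent: loads files into a fresh context, returns a result."""
    sub = Session(agent_name)  # starts empty, shares nothing with the primary
    sub.add(f"task: {task}")
    for path, text in files.items():
        sub.add(f"file {path}: {text}")
    # Stand-in for the real model call, which this sketch does not make.
    return f"{agent_name} finished '{task}' after reading {len(files)} file(s)"


def dispatch(primary: Session, prompt: str, files: dict[str, str]) -> str:
    """Route '@name task' prompts to a subagent; only the result enters primary."""
    if prompt.startswith("@"):
        agent_name, _, task = prompt[1:].partition(" ")
        result = run_subagent(agent_name, task, files)
    else:
        result = f"primary handled '{prompt}'"
    primary.add(result)
    return result
```

Calling `dispatch(Session("primary"), "@db-agent refactor schema", files)` leaves a single entry in the primary history: the subagent's summary, with none of the file contents it read along the way.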

Microservices for AI

Command line interfaces have barely evolved since Bash and Zsh first appeared. Text goes in, and text comes out. Now, the CLI hosts an entire virtual engineering department.

Engineering teams write simple Markdown files to customise these subagents. You define the agent’s expertise, its boundaries, and its execution environment. You give it a persona and a strict set of constraints. When the primary Gemini CLI encounters a problem requiring that specific expertise, it delegates the work automatically or waits for the manual `@agent` trigger.
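The exact schema of these files is not spelled out here, so the following Python sketch assumes a plausible shape: a `---` delimited header of `key: value` lines followed by the persona prompt. The field names (`name`, `description`, `allowed_paths`) and the `parse_agent_definition` helper are illustrative assumptions, not Gemini CLI's documented format.

```python
# Hypothetical agent definition; the field names are assumptions for illustration.
AGENT_FILE = """\
---
name: db-agent
description: Refactors database schemas
allowed_paths: db/, migrations/
---
You are a database specialist. Only touch schema and migration files.
"""


def parse_agent_definition(text: str) -> dict[str, str]:
    """Split a '---' delimited header of 'key: value' lines from the prompt body."""
    _, header, body = text.split("---", 2)
    config: dict[str, str] = {}
    for line in header.strip().splitlines():
        key, _, value = line.partition(":")
        config[key.strip()] = value.strip()
    config["prompt"] = body.strip()
    return config


agent = parse_agent_definition(AGENT_FILE)
```

The header carries the boundaries a tool can enforce mechanically, while the body carries the persona and constraints the model reads as instructions.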

Security teams will also appreciate this modular approach. Markdown files provide a clean, readable audit trail. A security engineer can open the configuration file and see exactly what permissions a specific subagent holds. This aligns with the principle of least privilege; if an agent is only configured to read the UI components folder, it cannot accidentally rewrite the core database configuration.
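As a rough illustration of that least-privilege check, the Python sketch below gates file access against a list of allowed directories. The `is_allowed` function is hypothetical and says nothing about how Gemini CLI enforces permissions internally; it also ignores symlinks, which a real implementation would need to resolve.

```python
import posixpath


def is_allowed(path: str, allowed_dirs: list[str]) -> bool:
    """True only if the normalised relative path sits inside an allowed directory."""
    normalised = posixpath.normpath(path)
    # Reject absolute paths and anything that climbs out of the project root.
    if normalised.startswith(("/", "..")):
        return False
    for allowed in allowed_dirs:
        base = posixpath.normpath(allowed)
        # Match the directory itself or a path below it, never a sibling prefix.
        if normalised == base or normalised.startswith(base + "/"):
            return True
    return False
```

With `allowed_dirs=["src/ui/"]`, a request for `src/ui/Button.tsx` passes, while `db/config.yaml` and the traversal attempt `src/ui/../../db/config.yaml` are both refused.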

Delegation happens in parallel. An engineer can trigger three different subagents to tackle three different background tasks simultaneously. One agent updates API documentation, another writes unit tests for a new payment gateway, and a third scans the codebase for deprecated libraries. The human developer sits at the centre of this web, orchestrating the execution and reviewing the pull requests.
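The fan-out pattern can be sketched with Python's `asyncio`. The agent names and the `run_agent` coroutine are hypothetical stand-ins, with a short sleep simulating model and tool latency; this shows the orchestration shape only, not how Gemini CLI schedules work.

```python
import asyncio


async def run_agent(name: str, task: str, seconds: float) -> str:
    """Stand-in for a subagent; the sleep simulates model and tool latency."""
    await asyncio.sleep(seconds)
    return f"{name}: {task} done"


async def delegate_all() -> list[str]:
    # gather() runs the three coroutines concurrently and returns results
    # in the order the tasks were passed in, not the order they finished.
    return await asyncio.gather(
        run_agent("docs-agent", "update API documentation", 0.03),
        run_agent("test-agent", "write payment gateway unit tests", 0.02),
        run_agent("deps-agent", "scan for deprecated libraries", 0.01),
    )


results = asyncio.run(delegate_all())
```

Because the tasks overlap, the total wait is roughly that of the slowest agent rather than the sum of all three, and the developer reviews the three results once they are collected.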

An ecosystem moving toward orchestration

This week, OpenAI updated its Agents SDK with native sandbox execution and a model-native harness. Its goal is the same as Google's: helping developers build secure, long-running agents that can traverse files and tools autonomously.

While OpenAI is attacking the problem from the software development kit layer, Google is embedding the solution natively into the terminal.

The terminal remains the developer’s undisputed home. Embedding intelligent orchestration natively into the CLI removes friction. It keeps engineers in their flow state without forcing them to toggle between web browser windows and code editors. Competing platforms must offer similar delegation protocols or risk obsolescence.

Decomposing complex engineering tasks into specialised subagents is how you ship software at scale. Deterministic execution is replacing probabilistic guessing. You force the AI to use specific tools in a specific order, rather than hoping it infers the right path from a vague instruction.
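One way to picture a fixed tool order is a pipeline where the orchestrator, not the model, decides which step runs next. The Python sketch below is an assumption-laden illustration: the step functions and `PIPELINE` list are hypothetical, and each step returns canned data where a real agent would call a tool or model.

```python
from typing import Callable

Step = Callable[[dict], dict]


def read_schema(state: dict) -> dict:
    return {**state, "schema": "users(id)"}


def generate_migration(state: dict) -> dict:
    return {**state, "migration": f"{state['task']} on {state['schema']}"}


def run_tests(state: dict) -> dict:
    return {**state, "tests_passed": "migration" in state}


# The order is fixed in code, so every run takes the same path.
PIPELINE: list[tuple[str, Step]] = [
    ("read_schema", read_schema),
    ("generate_migration", generate_migration),
    ("run_tests", run_tests),
]


def run_pipeline(task: str) -> dict:
    state: dict = {"task": task, "trace": []}
    for name, step in PIPELINE:
        state = step(state)
        state["trace"] = state["trace"] + [name]  # record which tool ran
    return state
```

Only the sequencing is deterministic in this sketch. Whatever a model produces inside an individual step would still be probabilistic, which is why the trace and the final test step matter for review.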

The heavy lifting of software development is moving to the background, executed by specialised agents running in parallel inside the terminal. Human engineers will define the boundaries, write the Markdown constraints, and approve the final pull requests.


Developer is powered by TechForge Media.