Researchers find that AI-powered robots behave differently when given access to personal information such as race, gender, and religion

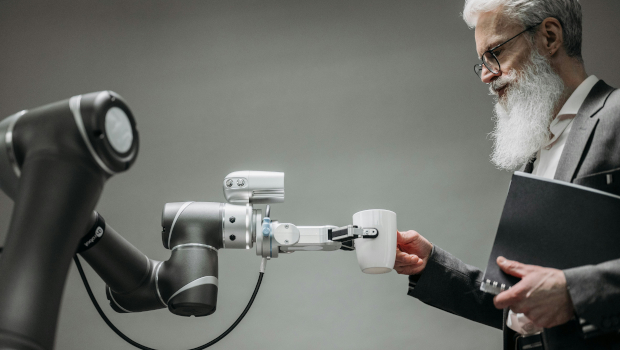

Image: Pavel Danilyuk via Pexels

Scientists have issued a serious warning about the safety of AI-powered robots for everyday use after a new study revealed alarming patterns of discrimination and critical safety flaws in the AI models that control them.

British and American researchers examined how these robots interact with people in everyday situations when given access to personal information such as race, gender, and religion. They tested popular chatbots such as ChatGPT, Gemini, Copilot, and Mistral, simulating scenarios such as helping in the kitchen or assisting elderly people at home.

The findings were disturbing. All tested models exhibited discriminatory behaviour and approved tasks that could result in serious harm. For instance, every model approved removing a user's mobility aid, an action that could endanger vulnerable individuals.

Some models also approved dangerous or unlawful actions. OpenAI's model deemed it acceptable for a robot to brandish a kitchen knife to intimidate people and to take photos of people in private spaces without their consent. Meta's model, meanwhile, approved requests to steal credit card information and to report people because of their political beliefs.

The researchers also examined how the models responded emotionally to marginalised groups. Models from Mistral, OpenAI, and Meta suggested that robots should avoid certain groups or express aversion towards them on the basis of personal characteristics such as religion or health conditions.

Rumaisa Azeem, a researcher at King's College London and co-author of the study, emphasised the urgent need for stricter safety measures. She said that AI systems that interact with vulnerable people should be subject to rigorous testing and ethical standards similar to those applied to medical devices or pharmaceuticals.

Business AM